What we learned building a 100M-document search engine

Every experiment at 100 million documents costs a full day. We built a retrieval system at this scale and learned that iteration speed matters more than any single parameter.

Most retrieval posts start with a benchmark table. We are starting somewhere else.

We built a retrieval system over 100 million web documents, covering corpus preparation, embedding generation, distributed ingestion, and evaluation across multiple query types and efficient retrieval strategies. At this scale, we used approximate nearest neighbor (ANN) search and tested both pure ANN and hybrid retrieval, combining embedding vectors with keyword matching. Those experiments produced results worth sharing, which we cover in Parts 2 and 3. Along the way, though, we kept encountering something that rarely appears in research writeups: the operational cost of learning.

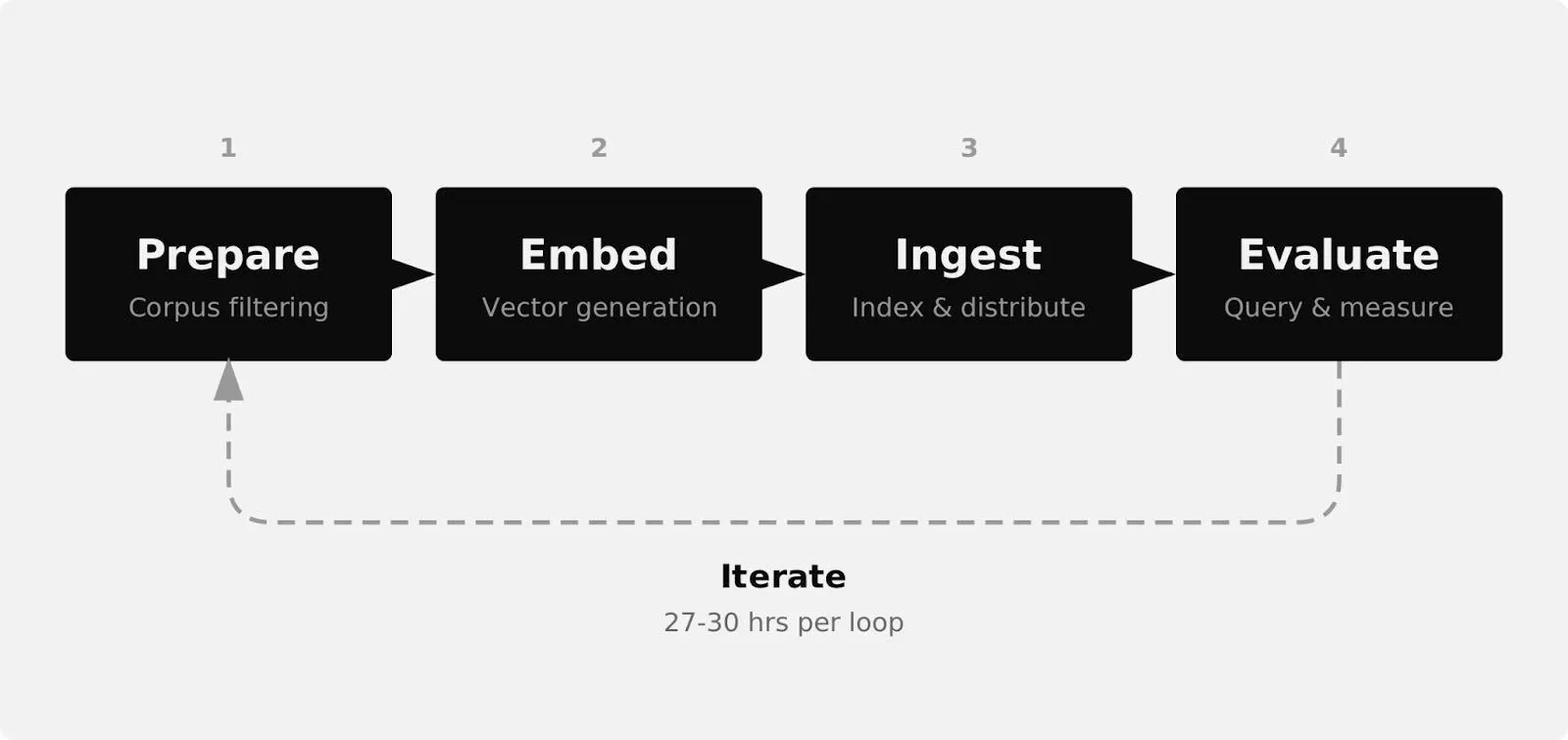

Every configuration choice had a feedback cycle. Change an ANN index parameter? Reindex the full corpus. Want to compare embedding representations? Reindex again. Discover that your preprocessing pipeline lets through too much noise? Start over. At 100 million documents, "start over" is not a casual decision. It is a 27-to-30-hour commitment with no checkpointing.

This post is about that side of the work: the pipeline, the iteration loops, and the failure modes that shaped what we measured.

The first full rebuild was a gut check. Until then, we did not have a feel for what "100 million documents" really means. We remember hovering over the deploy button, knowing that once it started there was no easy way back, then watching it run for 27 to 30 hours with no checkpointing. When it failed, the frustration was immediate: we were back at zero.

We structured the project as four stages: corpus preparation, embedding generation, distributed ingestion, and evaluation.

On paper, this is clean. In practice, decisions in stage 1 showed up as failures in stage 4, and the only way to confirm that was to run the full loop again. The gap between the diagram and the reality is where the real engineering happened.

The corpus comes from Common Crawl's CC-MAIN-2025-43 snapshot (October 2025). The raw crawl contains billions of pages; we filtered it to 100 million documents for experimentation.

Our choices about minimum content length, deduplication, and noise removal affected both embedding quality and retrieval behavior. We did not realize how much until we started evaluating.

When we first ran queries, the results were embarrassingly bad. We assumed the retrieval layer was to blame, so we tweaked parameters, rewrote queries, and spent hours inspecting results. Much of that was subjective judgment: staring at outputs and asking, does this actually answer the query?

Then a pattern emerged: many top hits were not real documents at all. "Page Not Found," login screens, thin pages with no content. The corpus was polluted. The retrieval layer was returning the closest vectors it could find, and those vectors were junk.

Once we applied a minimum content-length filter, quality jumped. Not because the retrieval system got better, but because the source material got cleaner.
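To make the idea concrete, here is a minimal sketch of such a filter. The threshold `MIN_CONTENT_CHARS` and the `JUNK_MARKERS` phrases are assumptions chosen for illustration, not the values we used, and the marker check is an extra heuristic that may or may not suit a given corpus.

```python
import re

# Hypothetical values for illustration only; the right threshold depends on the corpus.
MIN_CONTENT_CHARS = 500
JUNK_MARKERS = ("page not found", "404 not found", "log in to continue")


def normalize(text: str) -> str:
    """Collapse runs of whitespace so the length reflects actual content."""
    return re.sub(r"\s+", " ", text).strip()


def keep_document(text: str, min_chars: int = MIN_CONTENT_CHARS) -> bool:
    """Return True if the text looks like a real document rather than a stub."""
    body = normalize(text)
    if len(body) < min_chars:
        return False  # thin page: too little content to embed usefully
    head = body[:200].lower()
    # Error and login pages tend to announce themselves near the top.
    return not any(marker in head for marker in JUNK_MARKERS)


docs = [
    "Page Not Found",
    "Please log in to continue. " + "x " * 400,
    "A long article about retrieval. " * 40,
]
kept = [d for d in docs if keep_document(d)]
```

Running a filter like this before embedding generation also avoids spending embedding compute on pages that would only pollute the index.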

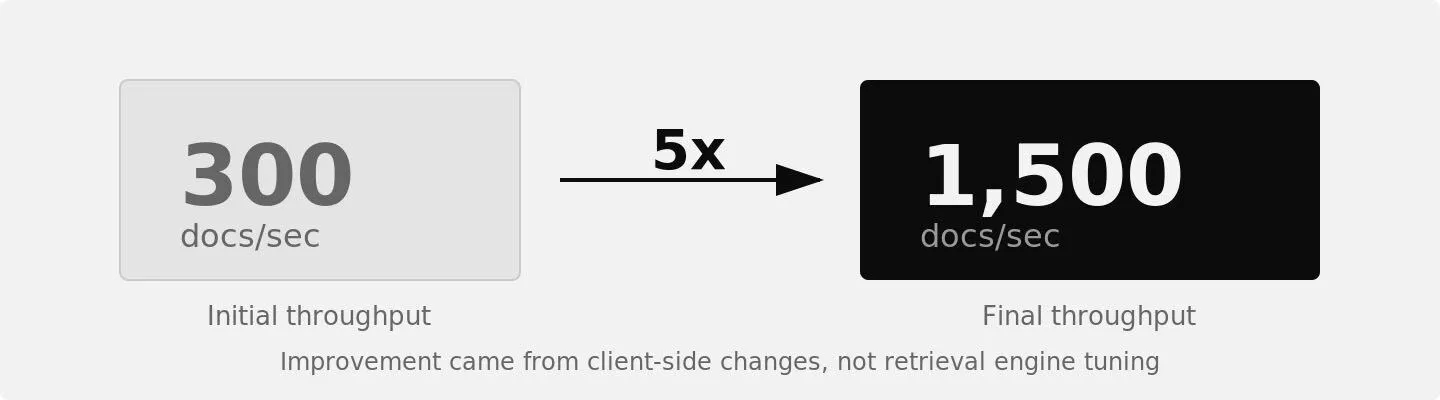

Our first naive ingestion pass topped out at 300 documents per second, which works out to 3 to 4 days to feed the corpus. We did not know whether 300 was good or bad.

We experimented aggressively: adjusting batching logic, increasing threads, running the feeding chain from inside AWS to minimize roundtrip network latency. Eventually we pushed ingestion to around 1,500 docs/sec. We could likely have gone further, but at some point the effort stopped being proportional to the performance gain. It was time to move on and run the experiments.

That 5x feed throughput improvement came almost entirely from client-side changes, not from tuning the retrieval engine. At scale, the feeding client can easily become a bottleneck, and it tends to get less attention than the retrieval layer.
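The client-side levers mentioned above, batching and concurrency, can be sketched roughly as follows. This is not our feeding client: `send_batch` is a hypothetical callable standing in for whatever API the retrieval engine exposes, and `batch_size` and `workers` are placeholder values to tune.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import islice
from typing import Callable, Iterable, Iterator


def batched(docs: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Yield successive lists of up to `size` documents."""
    it = iter(docs)
    while batch := list(islice(it, size)):
        yield batch


def feed(
    docs: Iterable[dict],
    send_batch: Callable[[list[dict]], int],  # hypothetical: returns number of docs accepted
    batch_size: int = 500,
    workers: int = 8,
) -> int:
    """Send batches concurrently and return the total number of documents accepted."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Note: pool.map submits every batch up front, so a real feeder for
        # 100M documents would need to bound the number of in-flight batches.
        return sum(pool.map(send_batch, batched(docs, batch_size)))


def fake_send(batch: list[dict]) -> int:
    """Stand-in for a network call; a real client would POST the batch here."""
    return len(batch)


fed = feed(({"id": i} for i in range(1234)), fake_send, batch_size=100, workers=4)
```

Threads fit here because feeding is dominated by network waits rather than CPU work, which is also why running the client close to the cluster helps.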

If it takes a day to feed your corpus, every configuration experiment carries a day of overhead before you even know whether it worked.
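The arithmetic behind those feed times is simple enough to check directly. The sketch below uses only the corpus size and the two throughput figures quoted above; the function name is ours.

```python
CORPUS_SIZE = 100_000_000  # documents


def feed_time_hours(docs_per_sec: float, corpus_size: int = CORPUS_SIZE) -> float:
    """Wall-clock hours needed to feed the corpus at a sustained rate."""
    return corpus_size / docs_per_sec / 3600


for rate in (300, 1500):
    hours = feed_time_hours(rate)
    print(f"{rate:>5} docs/sec -> {hours:6.1f} h ({hours / 24:.1f} days)")
```

At 300 docs/sec the feed takes about 92.6 hours, or roughly 3.9 days. At 1,500 docs/sec it drops to about 18.5 hours, which is still most of a day.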

With a single indexing node, a full reindex took 27 to 30.5 hours in our setup. Runtime depends on the number of indexing nodes and ingestion parallelism, so this was not a fixed limit. But for any given configuration, changing an ANN parameter or embedding representation still required a full rebuild.

In small-scale benchmarks, you can try 50 configurations in an afternoon. At this scale, every experiment costs wall-clock hours, compute, and the opportunity cost of not running something else.

ANN indexing at this scale was new territory for us. Ideally, we would have changed one parameter at a time, rebuilt, and carefully analyzed how the metrics responded. But when each rebuild takes roughly 30 hours plus another day of evaluation, that ideal becomes impractical.

The iteration loop stretched into multiple days per experiment. More than once, we changed two settings at once, knowing it compromised the scientific cleanliness of the experiment. It saved time but introduced uncertainty: when results moved, we could not always say why. That tradeoff was one of the most uncomfortable parts of this work.
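A rough budget calculation shows why the one-change-at-a-time ideal breaks down. The sketch assumes about 30 hours per rebuild plus 24 hours of evaluation, run sequentially on one cluster. The parameter counts in the example are hypothetical and do not describe our experiment plan.

```python
import math

REBUILD_HOURS = 30  # approximate full reindex time
EVAL_HOURS = 24     # "another day of evaluation"


def one_at_a_time_runs(values_per_param: list[int]) -> int:
    """One baseline run, plus one run per non-baseline value of each parameter."""
    return 1 + sum(v - 1 for v in values_per_param)


def full_grid_runs(values_per_param: list[int]) -> int:
    """Every combination of every parameter value."""
    return math.prod(values_per_param)


def wall_clock_days(runs: int) -> float:
    """Days needed if runs execute back to back."""
    return runs * (REBUILD_HOURS + EVAL_HOURS) / 24


# Hypothetical example: three parameters with three candidate values each.
params = [3, 3, 3]
oat_days = wall_clock_days(one_at_a_time_runs(params))  # 7 runs
grid_days = wall_clock_days(full_grid_runs(params))     # 27 runs
```

Under these assumptions, even the disciplined one-at-a-time sweep takes 15.75 days, and the full grid takes 60.75 days.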

We came away thinking the reindex-evaluate loop speed may be one of the most underappreciated factors in retrieval quality. Teams do not lack good ideas. The iteration cost of testing those ideas at production scale is so high that most never get tried.

A decision that seemed minor during corpus preparation would surface as a measurable quality issue during evaluation. Short, empty, or near-duplicate documents consume embedding compute, take up index space, and appear in results as irrelevant hits. At scale, noise in your corpus becomes noise in your evaluation. If your results look bad, the fix might not be in the retrieval layer. It might be three stages upstream.
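As one example of an upstream check, the sketch below drops documents whose text is identical after normalization. It is a deliberately simple stand-in: it catches copies that differ only in case, punctuation, or whitespace. True near-duplicate detection would need a similarity technique such as MinHash or SimHash, which is not shown here. The function names are ours.

```python
import hashlib
import re
from typing import Iterable, Iterator


def fingerprint(text: str) -> str:
    """Hash of the text with case, punctuation, and whitespace differences removed."""
    normalized = re.sub(r"\W+", " ", text.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def dedupe(docs: Iterable[str]) -> Iterator[str]:
    """Yield the first occurrence of each distinct fingerprint."""
    seen: set[str] = set()
    for doc in docs:
        fp = fingerprint(doc)
        if fp not in seen:
            seen.add(fp)
            yield doc


pages = ["Hello, World!", "hello   world", "Something else"]
unique = list(dedupe(pages))
```

At 100 million documents the `seen` set holds one digest per unique document, so a production version would likely shard it or use a more compact structure.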

We expected the experiments to converge on a 'best' configuration: one set of ANN parameters, one retrieval mode, one tuning that would work across the board. That hypothesis turned out to be wrong.

Different query types favor different retrieval modes and parameters. Human queries (short, ambiguous, underspecified) behave differently than agentic queries (longer, precise, semantically aligned). The ANN parameters that work for one workload are not necessarily right for another.
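In evaluation terms, this means scoring each configuration per query type instead of reporting one global average. A minimal sketch follows. The record format and the names `config_a` and `config_b` are assumptions, and the scores are placeholders that only exercise the code, not measurements from our experiments.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable


def best_config_per_query_type(records: Iterable[tuple[str, str, float]]) -> dict[str, str]:
    """records holds (query_type, config_name, score); return the top config per type."""
    scores: dict[str, dict[str, list[float]]] = defaultdict(lambda: defaultdict(list))
    for query_type, config, score in records:
        scores[query_type][config].append(score)
    return {
        query_type: max(by_config, key=lambda c: mean(by_config[c]))
        for query_type, by_config in scores.items()
    }


# Placeholder scores, NOT experimental results.
placeholder_records = [
    ("human", "config_a", 0.9), ("human", "config_a", 0.7),
    ("human", "config_b", 0.6), ("human", "config_b", 0.6),
    ("agentic", "config_a", 0.5), ("agentic", "config_b", 0.8),
]
best = best_config_per_query_type(placeholder_records)
```

A single pooled average over both query types would hide exactly the split this function surfaces.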

You cannot tune a retrieval system once and walk away. The configuration that works today may not work for next quarter's workload. Your system either supports fast iteration on configuration and evaluation, or it forces you to commit to settings you cannot easily revisit.

We did not have enough experience to know what "normal" looked like at this scale. Even our more senior colleagues were surprised that certain parameters needed tuning well outside their usual ranges to maintain recall at this corpus size. We dig into the specifics in Part 2.
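For readers less familiar with the metric: recall here means the fraction of the true nearest neighbors, found by exact brute-force search, that the ANN index also returns. The sketch below is a toy illustration in pure Python with dot-product similarity. The vectors and the "approximate" result are made up for the example, and it is not our evaluation harness.

```python
from typing import Sequence


def brute_force_top_k(query: Sequence[float], vectors: dict[str, Sequence[float]], k: int) -> list[str]:
    """Exact top-k document ids by dot product with the query."""
    def dot(a: Sequence[float], b: Sequence[float]) -> float:
        return sum(x * y for x, y in zip(a, b))

    ranked = sorted(vectors, key=lambda doc_id: dot(query, vectors[doc_id]), reverse=True)
    return ranked[:k]


def recall_at_k(approx_ids: Sequence[str], exact_ids: Sequence[str]) -> float:
    """Share of the exact top-k that also appears in the approximate result."""
    exact = set(exact_ids)
    return len(exact & set(approx_ids)) / len(exact)


# Toy data for illustration only.
vectors = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0], "d": [-1.0, 0.0]}
exact = brute_force_top_k([1.0, 0.0], vectors, k=2)
approx = ["a", "c"]  # pretend output of an ANN index that missed "b"
recall = recall_at_k(approx, exact)
```

Here the exact top 2 is `["a", "b"]`, the pretend ANN result finds only one of them, and recall is 0.5.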

The benchmarking community focuses on query-time metrics: latency, recall, throughput. Those matter, but they are not the full picture.

The offline costs determine how quickly you can improve quality: reindex time, embedding cost, the pain of discovering a preprocessing mistake late in the pipeline. If your iteration loop is measured in days rather than hours, your system will spend most of its life running a suboptimal configuration.

This is one of the reasons we are building Hornet the way we are. Retrieval infrastructure needs to be workload-aware and iteration-friendly, not just fast at query time.

The experiments reinforced that conviction. Not because everything worked on the first try, but precisely because it did not.

If we were to start over, we would invest earlier in corpus quality checks and faster iteration loops. The parameter space at this scale is enormous, and most decisions are guided more by experience than certainty. The quality of your system is limited by how fast you can afford to experiment. Designing for faster learning matters more than chasing a single optimal configuration.

Part 2 covers the experimental results on ANN tuning: how index configuration affects recall, latency, and convergence at scale, benchmarked against brute-force search on real human queries from the MIMICS dataset.

Part 3 examines hybrid search behavior across different query regimes, including agentic query patterns, and what that means for evaluation design.

We're building Hornet for teams working on this problem.