The context window is not your database

Context windows keep growing, but input length alone degrades reasoning. We explain why treating the context window as a database breaks under agentic workloads, and why retrieval still matters.

Someone on your team has probably said: "Why don't we just put everything in the context window?" Context window limits have grown from 4K tokens to over 1M. If the documents fit, why bother with a retrieval pipeline?

We build retrieval infrastructure for agents at Hornet, so we hear this a lot. And for plenty of use cases, long context is the right call. If your knowledge base is small and your query volume is low, stuffing it all in the window is simpler and often more accurate than chunked retrieval [1] [2].

But problems start when teams treat "it fits in the context window" as a retrieval strategy. Input length alone degrades reasoning, even when the model can find the right evidence. Real documents full of near-duplicate content make it worse. Agent loops multiply both problems across every turn, and the effects compound fast.

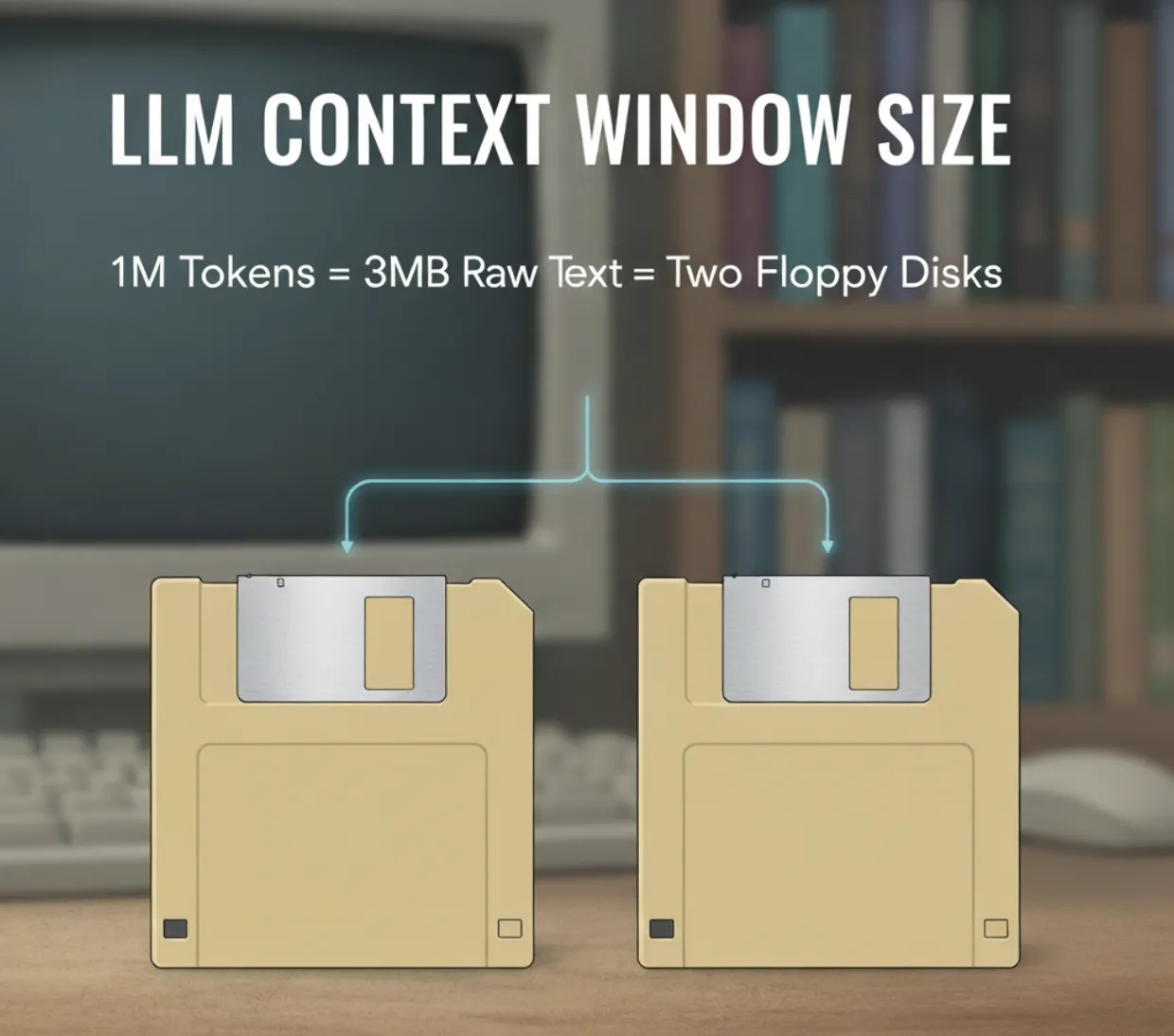

Our CEO Jo Kristian likes to put it this way: a million tokens is about three megabytes of text. That's two floppy disks from the 1980s. If your data fits on two floppies, you probably don't need retrieval. Stuff it in the context, ship the feature, move on.

The catch is what happens when agents enter the picture. Agents don't make one call. They run in turns, and every turn carries the full context forward. A ten-turn session over a million-token context isn't one million tokens of input; it's ten million. At $6 per million input tokens (Sonnet 4.6 long-context pricing), that's $60 for a single agent run. A study by Xu et al. measured RAG at roughly 1,250x lower cost per query than equivalent long-context approaches [3]. Once agents are looping, that gap widens with every turn.
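The arithmetic above is easy to check. This is a minimal back-of-envelope sketch, assuming the context is already full on the first turn and stays that size, every turn resends all of it, there is no prompt caching, and output tokens are ignored. `agent_run_cost` is a hypothetical helper, and the $6 price is the figure quoted above.

```python
# Back-of-envelope input cost for an agent loop that resends its full context each turn.
# Assumptions (not measured): context is full from turn 1 and stays that size,
# no prompt caching, output tokens ignored.
PRICE_PER_MILLION_INPUT = 6.00  # USD per 1M input tokens, the figure quoted in the text


def agent_run_cost(context_tokens: int, turns: int,
                   price_per_million: float = PRICE_PER_MILLION_INPUT) -> tuple[int, float]:
    """Return (total input tokens, input cost in USD) for `turns` calls of `context_tokens` each."""
    total_tokens = context_tokens * turns
    cost = total_tokens / 1_000_000 * price_per_million
    return total_tokens, cost


tokens, cost = agent_run_cost(context_tokens=1_000_000, turns=10)
print(f"{tokens:,} input tokens -> ${cost:.2f}")  # 10,000,000 input tokens -> $60.00
```

Prompt caching would lower the real bill; the sketch leaves it out to match the arithmetic in the text. The point is the linear factor of `turns`: the full-context price is paid again on every turn.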

The economics matter, but the accuracy problem is what keeps us up at night. My colleague Lester Solbakken coined the phrase "mutually assured distraction" [4] for the failure mode we kept seeing: plausible but incorrect evidence doesn't just sit in the window doing nothing. It gets promoted into the model's reasoning. Think about finance or legal documents, templated text where a single date or dollar amount is the only difference between two paragraphs. The model picks the wrong one, builds a confident answer on top of it, and nothing in the output tells you what happened.
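To make the "templated text" point concrete, here is a minimal sketch using Python's standard `difflib`. The two loan clauses are invented for illustration, and character-level similarity is only a rough proxy for how alike two passages look to a retriever or a model. What it shows is how little of the text carries the distinguishing signal.

```python
import difflib

# Two hypothetical clauses from templated loan documents: identical wording,
# different amount and date. Both look "relevant" to a question about repayment.
clause_a = ("The Borrower shall repay the principal amount of $250,000 "
            "on or before March 31, 2024, together with accrued interest.")
clause_b = ("The Borrower shall repay the principal amount of $275,000 "
            "on or before June 30, 2024, together with accrued interest.")

matcher = difflib.SequenceMatcher(None, clause_a, clause_b)
print(f"character-level similarity: {matcher.ratio():.2f}")

# Print only the spans that differ: this is the entire distinguishing signal.
for tag, i1, i2, j1, j2 in matcher.get_opcodes():
    if tag != "equal":
        print(f"{tag:>7}: {clause_a[i1:i2]!r} -> {clause_b[j1:j2]!r}")
```

A model that latches onto the wrong clause still has almost every token "right", which is why the resulting answer looks confident and gives no hint of the mix-up.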

Frontier models have mostly solved the positional recall problem (the old "lost in the middle" effect [5]). But distractors are different from needles, and the problem compounds in agent loops. Every tool call appends its output to the context. Ten iterations into a research task, the window is packed with intermediate results, abandoned hypotheses, and superseded information. The stuff the agent actually needs for its next decision might be 2% of what's in the window.
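The growth described above can be sketched in a few lines. The token counts here are hypothetical, chosen only to show the shape of the curve, and the sketch assumes every tool output is appended and nothing is ever pruned, summarized, or cached. `context_sizes` is an illustrative helper, not part of any framework.

```python
# Hypothetical sizes, chosen only to show the shape of the growth.
BASE_TOKENS = 5_000          # system prompt + task description
TOOL_OUTPUT_TOKENS = 20_000  # tokens appended by each tool call


def context_sizes(turns: int, base: int = BASE_TOKENS,
                  per_call: int = TOOL_OUTPUT_TOKENS) -> list[int]:
    """Input tokens on each turn when every tool output is appended and nothing is pruned.

    Turn t (0-indexed) sees the base context plus the outputs of the t calls before it.
    """
    return [base + per_call * t for t in range(turns)]


sizes = context_sizes(10)
for turn, size in enumerate(sizes, start=1):
    task_share = BASE_TOKENS / size  # how much of the window the original task still occupies
    print(f"turn {turn:2d}: {size:>9,} tokens in context, original task is {task_share:.0%} of it")
print(f"total input tokens across the run: {sum(sizes):,}")
```

Total input is `n*base + per_call*n*(n-1)/2`, so it grows quadratically with the number of turns, while the original task becomes a shrinking slice of each window. Pruning old tool outputs, or retrieving only what the next step needs, is what flattens that curve.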

We're not the only ones seeing this. LangChain's 2026 survey of 1,300+ practitioners put context management near the top of the pain list for teams running agents in production [6]. Google's ADK codified "scope by default," where every model call sees the minimum context it needs [7]. Fowler's team at Thoughtworks wrote about how the natural instinct when agents misbehave (giving them more context) actually makes things worse [8]. Everyone arrived at the same place we did: don't put everything in the window.

Here's the pattern we see: A team starts with "put everything in context" because it's fast and it works in demos. They go to production and hit costs that scale per query, latency that grows with context size, and accuracy problems they can't consistently reproduce because the failures are statistical. They realize they need to add retrieval.

A retrieval layer narrows millions of candidates down to the handful that matter for a given query. In return you get stable costs, access controls, provenance, and failures you can actually debug. Context windows will keep growing, and models will keep getting better at using them. But getting the right information into the context, and only the right information, is the hardest part of building reliable agents. That's retrieval. And it's worth building properly.
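As a rough illustration of what "narrowing to a handful" means, here is a toy retrieval step in plain Python. It is a sketch under loud assumptions, not Hornet's implementation: it scores documents with bag-of-words cosine similarity and scans the corpus linearly, where a real system would use an index with BM25, vector, or hybrid ranking. `Doc`, `retrieve`, and the `allowed_groups` access-control field are all hypothetical names. It does show where access control and provenance fit: filter before ranking, and return document IDs alongside scores.

```python
import heapq
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str                      # kept so answers can cite their source (provenance)
    text: str
    allowed_groups: frozenset[str]   # hypothetical access-control field


def _vector(text: str) -> Counter:
    """Bag-of-words term counts; a stand-in for a real index or embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: list[Doc], user_groups: set[str], k: int = 3) -> list[tuple[str, float]]:
    """Return up to k (doc_id, score) pairs the caller may see, best first."""
    q = _vector(query)
    # Enforce access control before ranking, so hidden documents never reach the context.
    visible = (d for d in corpus if d.allowed_groups & user_groups)
    scored = ((d.doc_id, _cosine(q, _vector(d.text))) for d in visible)
    # Keep only documents with some term overlap, then take the k best.
    return heapq.nlargest(k, (s for s in scored if s[1] > 0), key=lambda s: s[1])


corpus = [
    Doc("policy-7", "Refunds are issued within 30 days of purchase.", frozenset({"support"})),
    Doc("memo-2", "Quarterly revenue grew in the EMEA region.", frozenset({"finance"})),
    Doc("faq-1", "How do refunds work for annual plans?", frozenset({"support", "finance"})),
]
hits = retrieve("refunds for annual plans", corpus, user_groups={"support"}, k=2)
print(hits)  # faq-1 first, then policy-7; memo-2 is filtered out by access control
```

Only the `k` returned documents would go into the model's context, so the per-call cost depends on `k`, not on the corpus size.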

We're building Hornet for teams working on this problem.