Deep research is a retrieval problem

Why retrieval is a dominant bottleneck in BrowseComp-Plus.

Part 1 of a series on agentic retrieval.

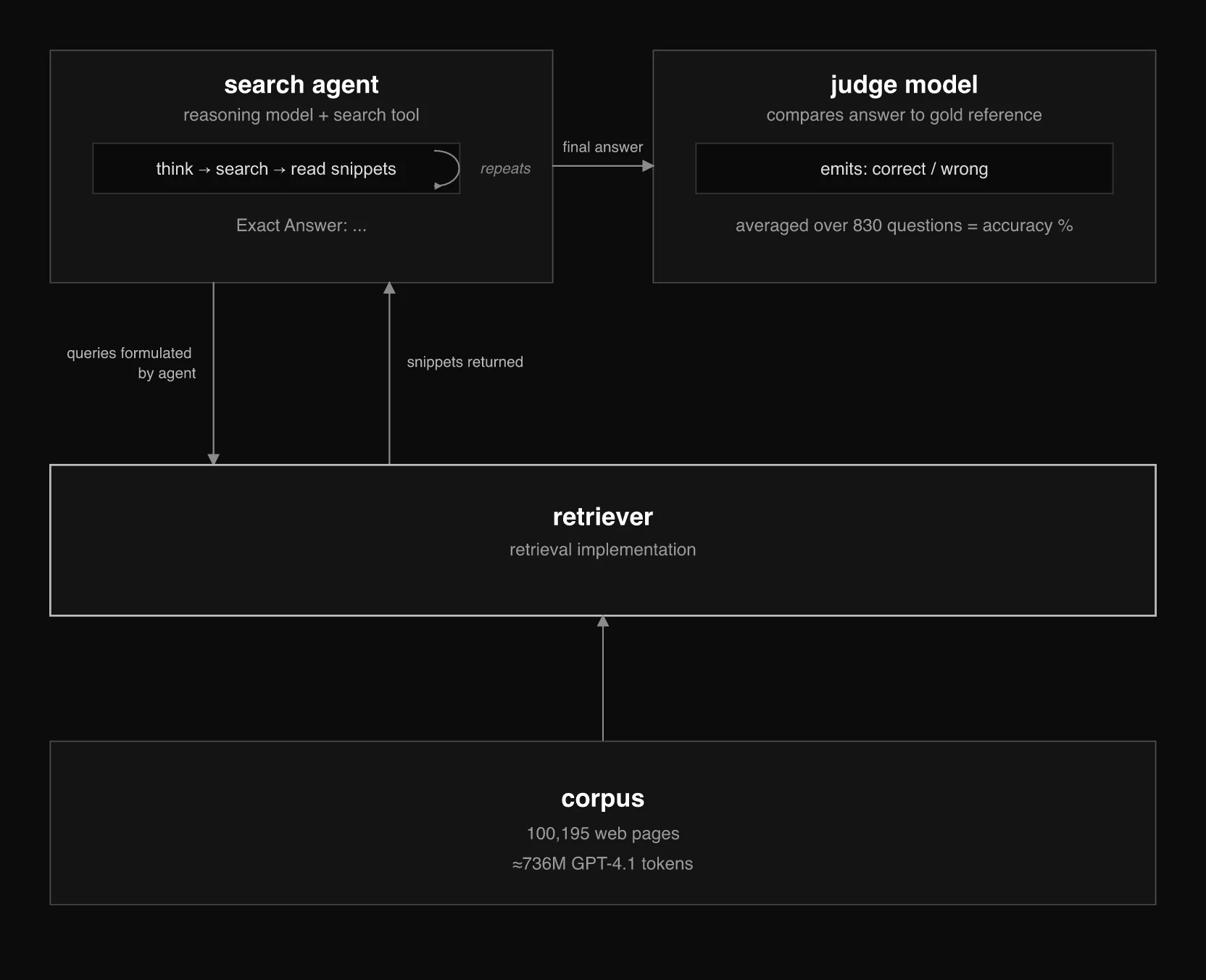

BrowseComp-Plus is a multi-hop question answering benchmark for agents. Questions are designed to require evidence from multiple documents in a fixed corpus of 100,195 web pages, about 736 million tokens in total as counted by the GPT-4.1 tokenizer. The benchmark also includes human-annotated evidence documents for each answer. Its headline number looks like a measure of end-to-end reasoning, but a closer look suggests that retrieval is a dominant bottleneck.

The clearest evidence comes from the paper's oracle retrieval setting, where the agent is prompted with all labeled positive documents up front instead of having to find them itself. This isolates what happens when retrieval is effectively perfect. In that setup, GPT-4.1 answers 93.49% of questions correctly. When the same model has to search the corpus with a weak, untuned BM25 baseline, accuracy falls to 14.58%. That gap is hard to explain without retrieval playing a major role.

The public leaderboard reports one number: the percentage of the 830 questions the agent answers correctly. That number folds together several distinct things: query formulation, retrieval quality, context limits, and answer extraction. To understand what BrowseComp-Plus is really measuring, you have to separate those layers.

BrowseComp-Plus as a retrieval pipeline: the agent searches a fixed corpus through a retriever, and a judge scores the final answer.

BrowseComp-Plus is not a deep-research benchmark in the sense of producing long reports or summaries. The answers are short and verifiable, often just a person's name, a date, or a currency amount. What makes the task difficult is that the questions are densely multi-hop and usually require evidence from multiple documents across the fixed corpus. The agent has to search, accumulate evidence, and reason over what it finds across many search turns.

Human annotators constructed the questions so they cannot be answered from any single page. A representative example where the answer is a name:

Could you provide the name of the individual who: as of December 2023, was the coordinator of a research group founded in 2009; co-edited a book published in 2018 by Routledge; had a co-editor who was a keynote speaker at a conference in 2019; served as the convenor of a panel before 2020; published an article in 2012; and completed their PhD on the writings of an English writer.

The corpus was built deliberately to make this hard. Human annotators verified which documents are needed to answer each question and labeled exactly which text spans contain the answer. Each question has, on average, 6.1 evidence documents, 2.9 gold documents, and 76 hard negatives. The evidence documents are needed to answer the question. The gold documents are a stricter subset that semantically contain the reference answer string.

Long context does not remove the retrieval problem. A 128k context window holds only about 0.017% of the corpus (736M tokens); even a 1M-token window holds only about 0.14%. We wrote about the broader version of this argument in "The context window is not your database."
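
The arithmetic behind those percentages is easy to check. This sketch assumes "128k" means 128,000 tokens and "1M" means 1,000,000 tokens (binary sizes such as 131,072 would shift the figures only slightly) and uses the ~736M-token corpus size quoted above.

```python
# Share of the BrowseComp-Plus corpus that fits in a single context window.
# Assumption: "128k" = 128,000 and "1M" = 1,000,000 tokens; the corpus size is
# the ~736M GPT-4.1 tokens quoted in the text.
CORPUS_TOKENS = 736_000_000


def corpus_fraction(window_tokens: int, corpus_tokens: int = CORPUS_TOKENS) -> float:
    """Return the percentage of the corpus that fits in `window_tokens`."""
    return 100.0 * window_tokens / corpus_tokens


for label, window in [("128k", 128_000), ("1M", 1_000_000)]:
    print(f"{label}: {corpus_fraction(window):.3f}% of the corpus")
```

It prints roughly 0.017% and 0.136%, the latter rounding to the 0.14% above. Put differently, the corpus is about 5,750 times larger than a 128k window.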

The agent does not get the full text of the document: the standard evaluation protocol truncates snippets to 512 tokens. The answer may be in the retrieved document but past the visible window, which is a failure mode independent of document-level retrieval quality.
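
A minimal sketch of this failure mode, assuming plain whitespace splitting as a stand-in for the real tokenizer (the actual protocol counts model tokens, so the cut point will differ). The helper names and the sample document are hypothetical.

```python
# Does the answer survive snippet truncation?
# Assumption: whitespace splitting approximates tokenization; the real protocol
# truncates by model tokens, so the cut point will differ in practice.
def truncate_to_tokens(text: str, max_tokens: int = 512) -> str:
    """Keep only the first `max_tokens` whitespace-delimited tokens."""
    return " ".join(text.split()[:max_tokens])


def answer_visible(doc_text: str, answer: str, max_tokens: int = 512) -> bool:
    """True if the answer string appears inside the truncated snippet."""
    snippet = truncate_to_tokens(doc_text, max_tokens)
    return answer.lower() in snippet.lower()


# Hypothetical document: the answer appears only after 600 filler tokens.
doc = "filler " * 600 + "The group coordinator was Jane Example."
answer = "Jane Example"

print(answer.lower() in doc.lower())  # True: the document contains the answer
print(answer_visible(doc, answer))    # False: the answer lies past token 512
```

The document counts as relevant under any document-level metric, yet the agent never sees the answer. Only a check against the visible snippet exposes this.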

The combination of long multi-hop questions, a large and noisy corpus, and truncated snippets makes this one of the most demanding benchmarks for search-augmented reasoning. The fixed corpus and question set also make it an excellent testbed: you can swap retrievers, tune parameters, and change agent behavior while getting a much clearer view of what moved and why.

The oracle result is the clearest signal, but it is not the only one. Two additional results point in the same direction.

First, the same pattern appears with weaker models. Using Qwen3-32B as the LLM, the oracle experiment reaches 83% accuracy. But when the model has to use a search tool to find its own evidence, accuracy falls below 5%. That gap does not mean reasoning is irrelevant. It does show that retrieval quality and search behavior are major constraints in BrowseComp-Plus.

Second, the one-shot retrieval results point in the same direction. Weak lexical retrieval leaves a large share of the evidence undiscovered, while stronger embedding-based retrieval brings much more of it into view. That does not settle every question about agent-loop performance, but it does show that retrieval quality strongly shapes what evidence the model gets to reason over.

The leaderboard shows one number, but it compresses three distinct evaluation layers.

One-shot retrieval quality measures what the retriever returns for the full question as a single query, scored against the human-annotated evidence documents. This lets us compute standard IR metrics such as Recall@5, Recall@100, Recall@1000, and nDCG@10.
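
For concreteness, here is a minimal sketch of Recall@k and nDCG@10 for a single question, assuming binary relevance (a document counts as relevant if it is one of the question's evidence documents). The benchmark's own evaluation scripts may define relevance or gains differently, and the document IDs are made up.

```python
import math


def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(ranked[:k]) & relevant) / len(relevant)


def ndcg_at_k(ranked: list[str], relevant: set[str], k: int = 10) -> float:
    """nDCG@k with binary gains (1 if the document is relevant, else 0)."""
    # The discount uses a 0-based rank, so the top position gets 1 / log2(2) = 1.
    dcg = sum(
        1.0 / math.log2(rank + 2)
        for rank, doc_id in enumerate(ranked[:k])
        if doc_id in relevant
    )
    # Ideal ranking: all relevant documents first, up to k of them.
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(min(len(relevant), k)))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical ranking for one question with three evidence documents.
ranked = ["d7", "d2", "d9", "d4", "d1"]
evidence = {"d2", "d4", "d8"}

print(round(recall_at_k(ranked, evidence, 5), 3))  # 0.667
print(round(ndcg_at_k(ranked, evidence, 10), 3))   # 0.498
```

Averaging such per-question scores over the question set is the usual way to report these metrics in a table.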

Session-level recall measures the cumulative set of evidence documents retrieved across all queries the agent makes. It shows how much of the needed evidence the agent actually retrieves. A retriever that looks strong in one-shot evaluation can still perform poorly if the agent formulates weak queries.
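
Session-level recall is the same recall computation applied to the union of everything the agent retrieved. This sketch assumes each search call returns a list of document IDs and that every returned ID counts as retrieved; the function name and IDs are hypothetical.

```python
def session_recall(calls: list[list[str]], evidence: set[str]) -> float:
    """Share of evidence documents returned by *any* search call in a session."""
    if not evidence:
        return 0.0
    seen: set[str] = set()
    for results in calls:
        seen.update(results)  # accumulate across calls; duplicates collapse
    return len(seen & evidence) / len(evidence)


# Hypothetical session: three search calls for one question.
evidence = {"e1", "e2", "e3", "e4"}
calls = [
    ["x1", "e1", "x2"],
    ["e2", "x1"],
    ["x3", "e1", "e3"],
]

best_single = max(session_recall([c], evidence) for c in calls)
print(best_single)                      # 0.5
print(session_recall(calls, evidence))  # 0.75
```

No single call finds more than half of the evidence here, but together they cover three quarters of it, so a per-query view can understate what the agent actually gathered.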

End-to-end judged accuracy is the headline leaderboard number. It measures whether the system ultimately returns the correct answer. A system can retrieve the right documents and still fail: the decisive text may fall outside the 512-token snippet window, or the reasoning model may fail to extract it. These are different failure modes, but the leaderboard collapses them into a single score.
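
One way to pull these layers apart is to tag each wrong answer with the first layer that failed. The sketch below is a hypothetical attribution scheme, not part of the benchmark: it assumes you have logged, per question, the judge's verdict, whether any gold document was retrieved during the session, and whether the answer string appeared in a returned snippet.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class QuestionTrace:
    """Hypothetical per-question log record."""
    correct: bool            # judge verdict on the final answer
    gold_retrieved: bool     # any gold document returned during the session
    answer_in_snippet: bool  # answer string visible in some returned snippet


def failure_mode(t: QuestionTrace) -> str:
    """Attribute a question to the first layer that failed, in pipeline order."""
    if t.correct:
        return "correct"
    if not t.gold_retrieved:
        return "retrieval_miss"     # the gold evidence was never found
    if not t.answer_in_snippet:
        return "snippet_truncated"  # found, but the answer never appeared in a visible snippet
    return "extraction"             # visible, but the final answer was still wrong


traces = [
    QuestionTrace(correct=True, gold_retrieved=True, answer_in_snippet=True),
    QuestionTrace(correct=False, gold_retrieved=False, answer_in_snippet=False),
    QuestionTrace(correct=False, gold_retrieved=True, answer_in_snippet=False),
    QuestionTrace(correct=False, gold_retrieved=True, answer_in_snippet=True),
]
print(Counter(failure_mode(t) for t in traces))
```

The checks run in pipeline order, so a question is blamed on retrieval before truncation and on truncation before extraction.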

Each layer isolates a different part of the system. The headline number alone does not tell you what is actually constraining performance.

BrowseComp-Plus is useful because it breaks a vague idea of "deep research" into concrete layers: query formulation, retrieval, context exposure, and answer extraction. Performance depends on all of them, but retrieval is central because it determines what evidence the model ever gets a chance to use.

Part 2 examines the agent's actual search behavior: 19,279 search calls across 830 questions, phrase search in 98% of sessions, and queries that narrow as evidence accumulates.

Part 3 examines why BM25 looks weak in the one-shot retrieval table but considerably stronger inside the agent loop, and what that means for how you evaluate retrieval.

Part 4 looks at what happens when you put the best retrieval strategies together.

We're building Hornet for teams working on agentic retrieval.